A complete guide to AI accelerators for deep learning inference — GPUs, AWS Inferentia and Amazon Elastic Inference | by Shashank Prasanna | Towards Data Science

Sun Tzu's Awesome Tips On Cpu Or Gpu For Inference - World-class cloud from India | High performance cloud infrastructure | E2E Cloud | Alternative to AWS, Azure, and GCP

Inference latency of Inception-v3 for (a) CPU and (b) GPU systems. The... | Download Scientific Diagram

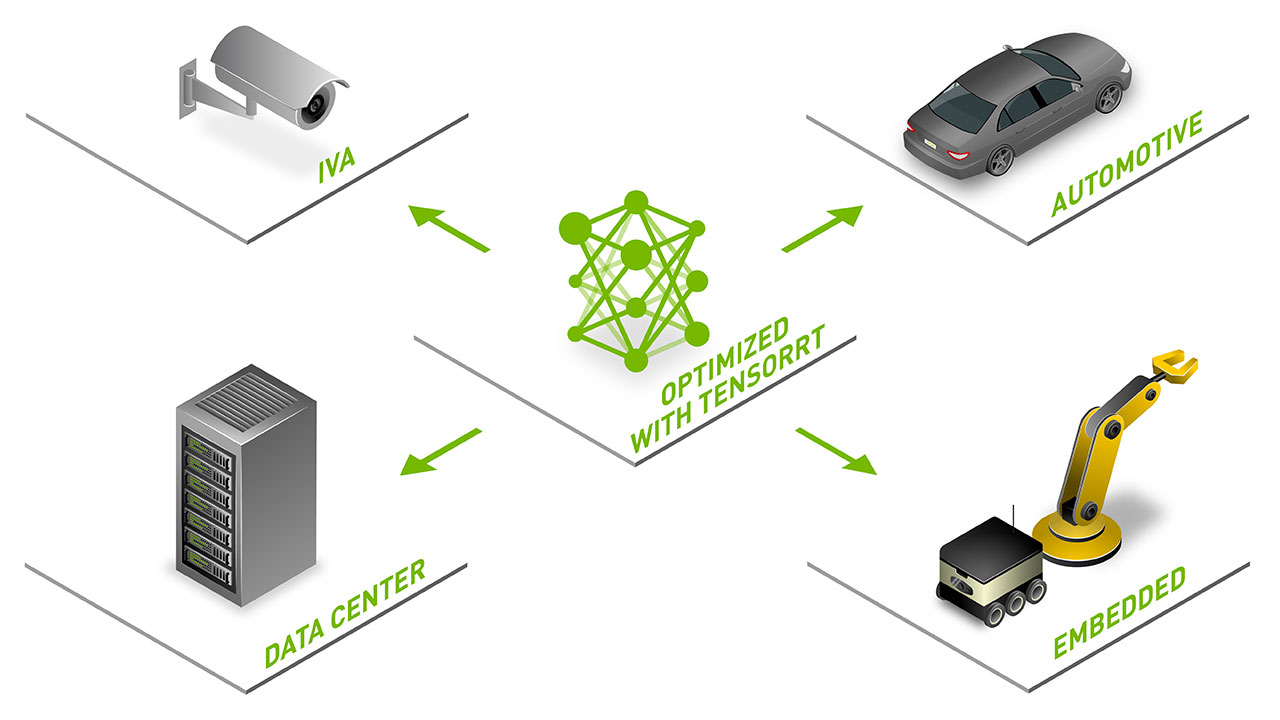

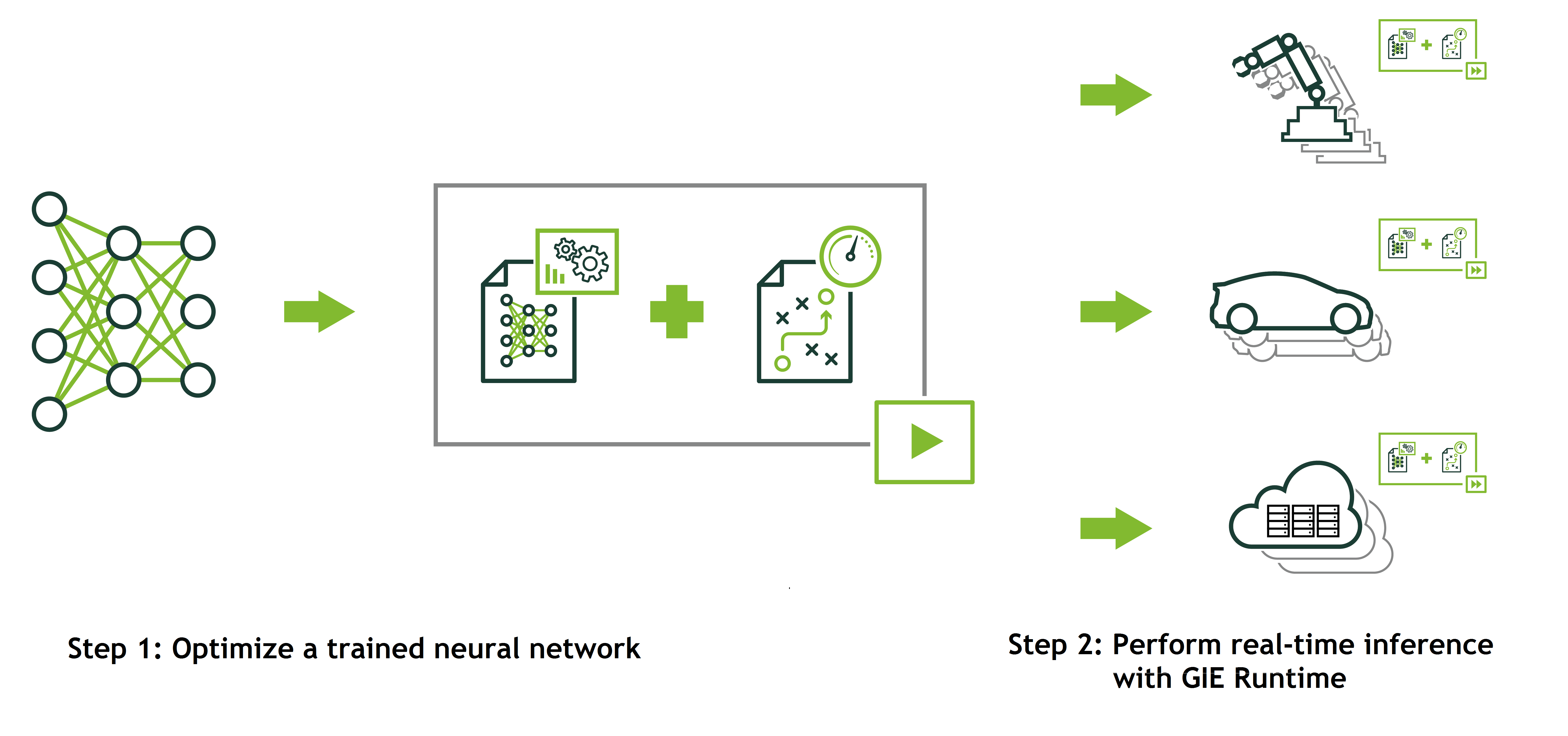

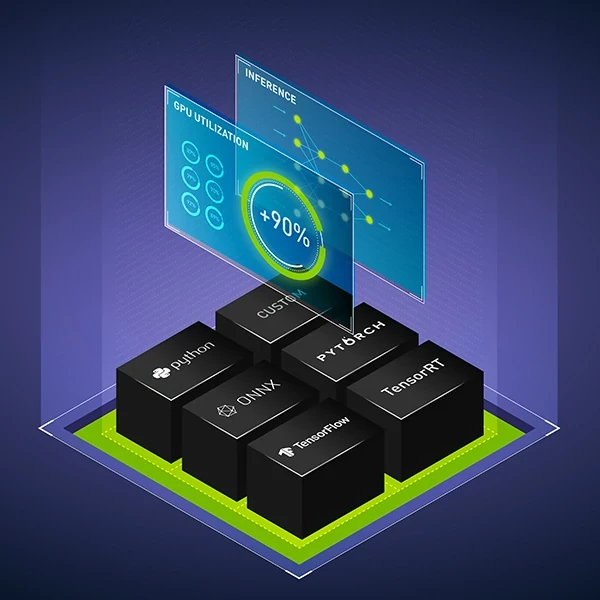

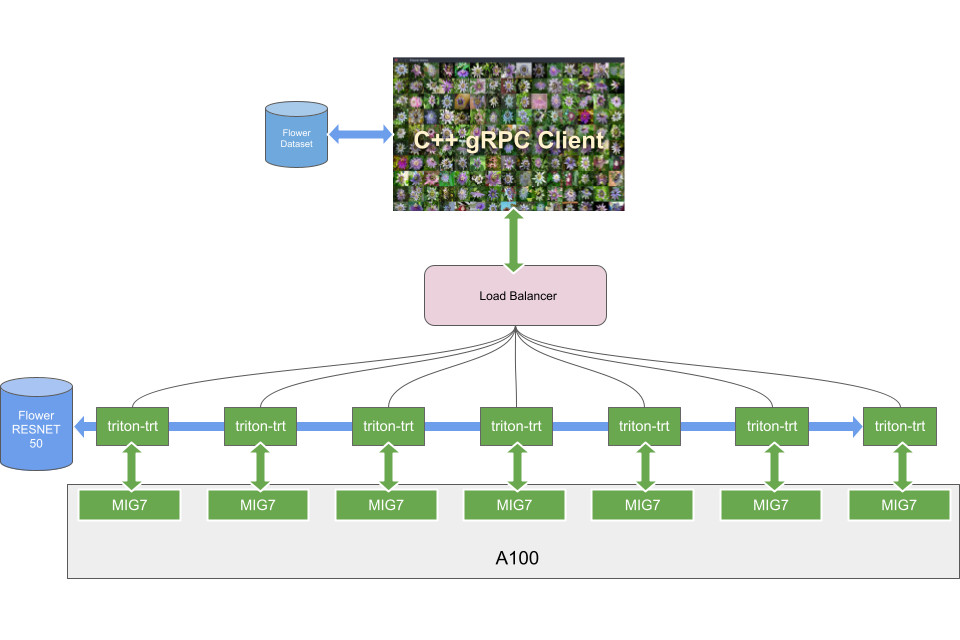

GPU-Accelerated Inference for Kubernetes with the NVIDIA TensorRT Inference Server and Kubeflow | by Ankit Bahuguna | kubeflow | Medium

Mipsology Zebra on Xilinx FPGA Beats GPUs, ASICs for ML Inference Efficiency - Embedded Computing Design

FPGA-based neural network software gives GPUs competition for raw inference speed | Vision Systems Design

NVIDIA AI on Twitter: "Learn how #NVIDIA Triton Inference Server simplifies the deployment of #AI models at scale in production on CPUs or GPUs in our webinar on September 29 at 10am

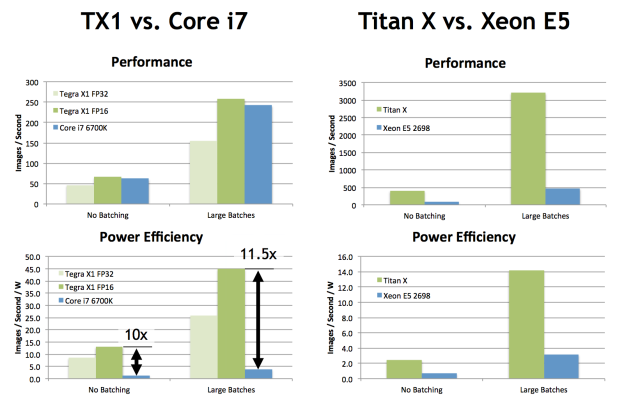

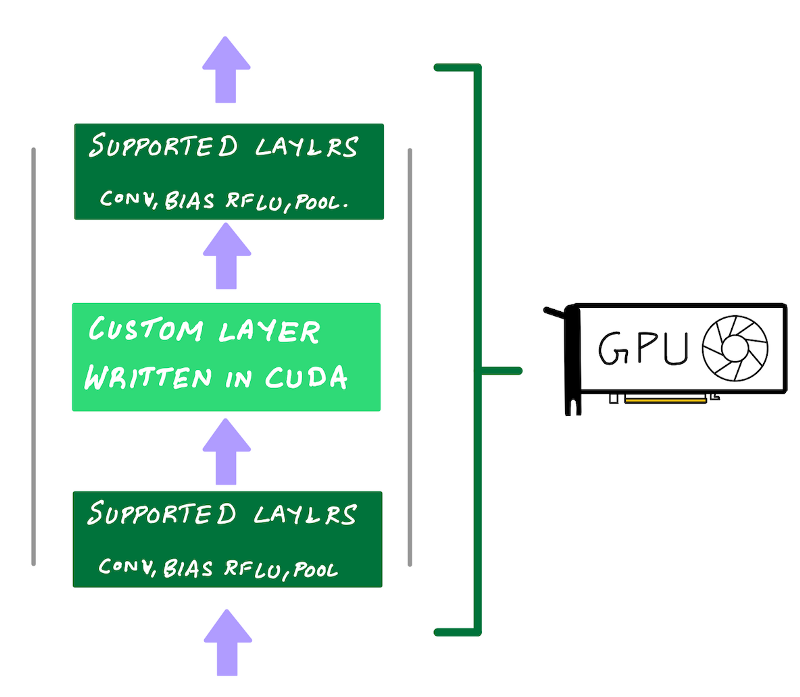

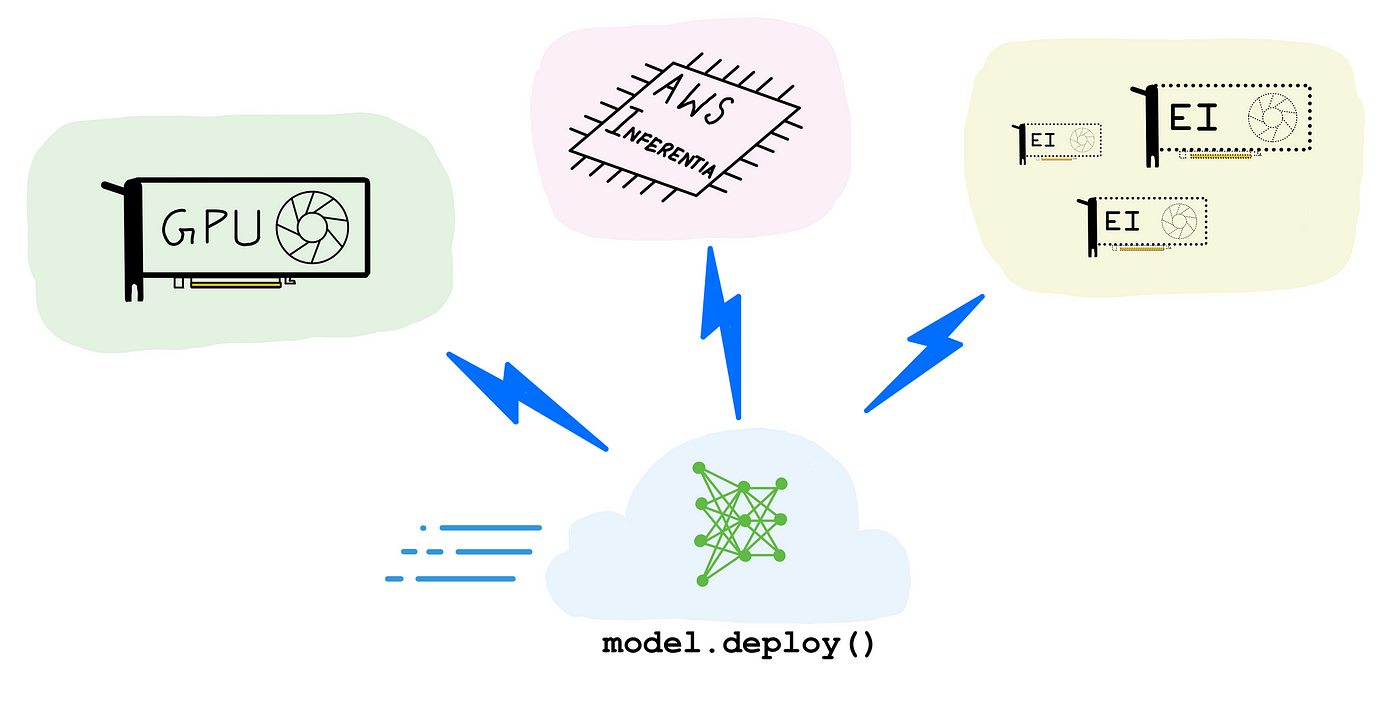
